Core & Satellites: A Pragmatic Architecture for AI, No-Code, and Pro-Code

Working with new tools in complex systems

The Wrong Debate

The current discussion is broken.

AI vs. no-code vs. “real” code - framed as a replacement battle. As if one will win and the others will die. As if we’re picking sides in a religious war.

This is a category error.

These are not ideological camps. They’re tools with different effort-to-complexity curves. And if you’re arguing about which one is “the future,” you’ve already lost the plot.

The interesting question isn’t which tool wins. It’s where each tool belongs.

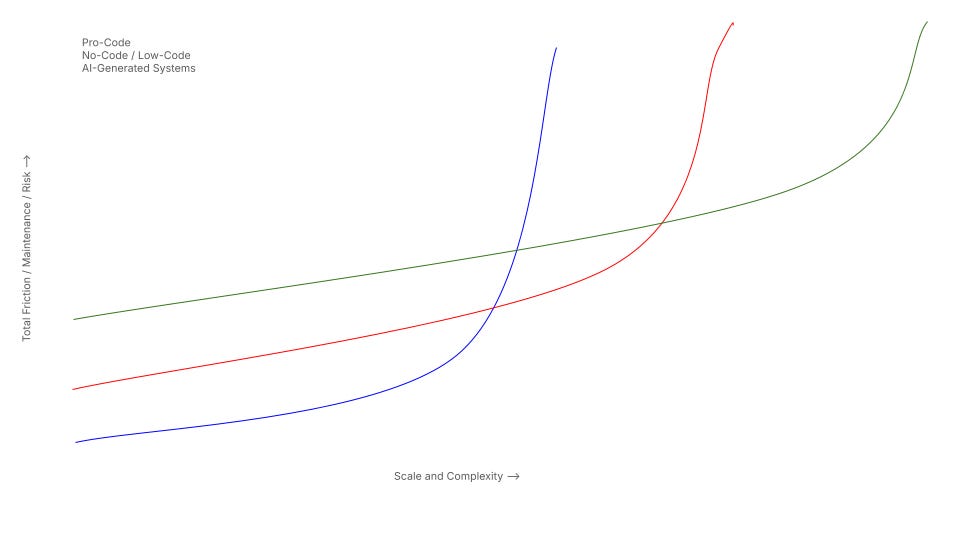

The Effort-Complexity Curve

Let’s build a simple model. X-axis: complexity. Y-axis: cost and effort.

Now draw three curves.

AI-generated systems start with the lowest entry cost. Minimal skill barrier. Absurdly fast for simple artifacts - websites, forms, automations. Tools like Lovable, or prompting your way through Claude Code without ever opening an IDE, fall into this category. But the logic is opaque. And the exponential failure point appears early. Add scale, add interdependencies, and watch it buckle.

No-code sits in the middle. Higher entry cost than AI (licenses, learning curves). But more deterministic. More transparent. It handles structured workflows better, though you will run into platform constraints every now and then. The exponential scaling pain occurs later - but it still occurs. At FINN, Airtable was our case study: brilliant until it turns out your data architecture was designed for neither growth nor portability, and suddenly you’re rebuilding everything.

Pro-code has the highest initial cost. Expertise, salaries, time. But you get full control over architecture. Highest resilience under scale. The exponential complexity breakpoint sits furthest to the right.

Here’s the insight nobody wants to accept: each approach dominates in a specific complexity range. Not everywhere. Not forever. In a range.
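To make the "range" idea concrete, here is a minimal sketch of the model. It assumes each curve is a plain exponential, `entry * e^(rate * complexity)`, and every number in `CURVES` is invented for illustration, not measured. The function names are hypothetical too.

```python
import math

# Hypothetical (entry_cost, growth_rate) pairs, invented for illustration.
CURVES = {
    "ai": (1.0, 0.9),         # cheapest to start, steepest growth
    "no_code": (3.0, 0.5),    # in the middle on both
    "pro_code": (8.0, 0.25),  # most expensive to start, flattest growth
}


def cost(approach: str, complexity: float) -> float:
    """Effort at a given complexity, assuming cost = entry * e^(rate * complexity)."""
    entry, rate = CURVES[approach]
    return entry * math.exp(rate * complexity)


def cheapest(complexity: float) -> str:
    """Name of the approach with the lowest modelled cost at this complexity."""
    return min(CURVES, key=lambda name: cost(name, complexity))


def crossover(steeper: str, flatter: str) -> float:
    """Complexity where the two curves meet; beyond it the flatter one is cheaper."""
    entry_s, rate_s = CURVES[steeper]
    entry_f, rate_f = CURVES[flatter]
    return math.log(entry_f / entry_s) / (rate_s - rate_f)


if __name__ == "__main__":
    for c in (1.0, 3.3, 6.0):
        print(c, cheapest(c))
```

With these made-up parameters, AI is cheapest at complexity 1.0, no-code at 3.3, and pro-code at 6.0. Change the numbers and the ranges shift, but as long as lower entry cost comes with steeper growth, each approach can win in at most one band, and whether it wins anywhere depends on the parameters.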

The Architectural Mistakes

Two extremes dominate the discussion around these tools. Both are wrong.

The Pro-Code Purist

The assumption: every system must belong to the core.

The consequence: everything gets treated as mission-critical. Every small workflow becomes architectural overhead. Every request goes into the backlog. Innovation dies in sprint planning meetings.

These teams ignore modular boundaries. They build cathedrals when they need tents. And they wonder why the business keeps complaining about speed.

The AI / No-Code Maximalist

The assumption: everything can be built with AI or no-code. Just wire it up. Ship fast. Iterate.

The consequence: scaling fragility. Hidden technical debt. Opaque logic under load. Data inconsistencies that nobody can trace. Security risks that nobody understands until the audit.

These teams underestimate B2B complexity accumulation. They build tents when they need foundations. And they wonder why everything breaks at scale.

But here’s the contradiction: both camps are partially right. Not every system needs to be mission-critical. And not every workflow should be handed to engineers. The question is how to separate them.

The Core & Satellite Model

Think of it as planetary architecture.

The “core” is the planet. The “satellites” are moons. Gravitationally attached to the core, but independent.

The Core (Should be Pro-Code)

The core handles:

Data integrity

Authentication and authorization

Security

Validation logic

Scalability

Auditability

Core APIs

The core absorbs high load, cross-system dependencies, and mission-critical workflows. It doesn’t move fast. It moves right.

The core must be engineered. Full stop. No discussions.

The Satellites (Should be AI / No-Code)

The satellites are different. Lightweight. Independent. Loosely coupled. Replaceable. Ideally user-maintained.

Examples:

Internal tools

Micro-automations

Landing pages

One-page apps

Workflow-specific dashboards

Temporary experiments

They connect to the core via APIs, webhooks, MCP-style interfaces, and validated endpoints. They consume systemic truth. They don’t own it.

Satellites are allowed to fail. That’s the point.
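"Consume, don't own" can be sketched in a few lines. This is an in-process stand-in for what would normally be an HTTP API, and everything in it is hypothetical: the `Core` class, the order record, the allowed statuses. The point is only the shape: reads hand out copies, writes pass through validation.

```python
from copy import deepcopy

ALLOWED_STATUSES = {"open", "paid", "cancelled"}  # hypothetical domain rule


class ValidationError(Exception):
    """Raised when a satellite sends a change the core refuses."""


class Core:
    """Owns the records; every read and write goes through its methods."""

    def __init__(self) -> None:
        self._orders = {"o-1": {"status": "open", "amount": 120}}

    def get_order(self, order_id: str) -> dict:
        # Return a copy so a satellite cannot mutate core state by accident.
        return deepcopy(self._orders[order_id])

    def set_status(self, order_id: str, status: str) -> None:
        if order_id not in self._orders:
            raise ValidationError(f"unknown order: {order_id}")
        if status not in ALLOWED_STATUSES:
            raise ValidationError(f"invalid status: {status}")
        self._orders[order_id]["status"] = status


def dashboard_row(core: Core, order_id: str) -> str:
    """A satellite: formats core data for display and keeps no copy of its own."""
    order = core.get_order(order_id)
    return f"{order_id}: {order['status']} ({order['amount']})"
```

The satellite can be thrown away and rewritten in an afternoon. Nothing it does can leave the core in a state the core didn't approve.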

Why This Model Works

Risk Containment

If a satellite fails, it doesn’t collapse the planet. You can cut it off. Users complain, you rebuild it, life goes on.

If the core fails, everything collapses.

Therefore: stability lives at the center, experimentation lives at the edge.

This isn’t just architecture. It’s organizational risk management.
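One way to picture "you can cut it off" is a sketch under assumptions, not a prescription. The hypothetical `SatelliteRegistry` below sits on the core side, pushes events to satellites, swallows their failures, and drops a satellite after a configurable number of errors. The class name, the `max_failures` threshold, and the callback style are all illustrative choices.

```python
from typing import Callable

Handler = Callable[[dict], None]


class SatelliteRegistry:
    """Core-side fan-out that isolates satellite failures from the core."""

    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self._handlers: dict[str, Handler] = {}
        self._failures: dict[str, int] = {}

    def register(self, name: str, handler: Handler) -> None:
        self._handlers[name] = handler
        self._failures[name] = 0

    def active(self) -> list[str]:
        return sorted(self._handlers)

    def publish(self, event: dict) -> list[str]:
        """Send the event to every active satellite; return those that succeeded."""
        delivered = []
        # Iterate over a snapshot so a satellite can be removed mid-loop.
        for name, handler in list(self._handlers.items()):
            try:
                handler(event)
                delivered.append(name)
            except Exception:
                # The failure stays local: count it and keep serving the others.
                self._failures[name] += 1
                if self._failures[name] >= self.max_failures:
                    del self._handlers[name]  # cut the satellite off
        return delivered
```

A broken dashboard raises, gets counted, and eventually gets unplugged. The core and every other satellite keep running.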

Cultural Leverage

The real unlock: empower staff to build satellites.

Give them access to clean APIs. Give them clear governance rules. Give them guardrails around data access. Give them a defined escalation path when something needs to migrate to the core. Finally, give them the tools of your choosing, and let them build. I’ve seen this work at multiple companies now (FINN & Jobvalley), and the results are convincing.

What you get: increased autonomy, faster local optimization, reduced bottlenecks.

What you avoid: the engineering team becoming a bottleneck for every internal tool request.

Central reliability. Distributed innovation. That’s the trade.

Complexity Management

The key mistake most organizations (especially enterprises) make: treating all software as core software.

Small tools don’t require full scalability guarantees. They don’t need enterprise-grade lifecycle management. They don’t need heavy governance.

Overengineering small use cases destroys speed. Underengineering core systems destroys companies.

This model separates the two. On purpose.

The Transition Threshold

Over time, some satellites grow.

Usage increases. Dependencies multiply. Revenue starts to depend on them. Compliance risk rises.

At some point, the satellite migrates inward. It becomes core.

This creates a natural evolution path: Experiment → Validate → Stabilize → Institutionalize.

Not everything starts as infrastructure. But some things become infrastructure. The model accounts for that.
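The four signals above can be turned into an explicit check. What follows is a sketch with invented thresholds and an assumed policy: revenue or compliance alone triggers migration; usage and dependencies only count together. Your own cut-offs will differ. The value is in writing them down at all.

```python
from dataclasses import dataclass


@dataclass
class SatelliteSignals:
    weekly_users: int
    dependent_systems: int
    revenue_depends_on_it: bool
    compliance_relevant: bool


def migration_reasons(s: SatelliteSignals, max_users: int = 50,
                      max_dependents: int = 2) -> list[str]:
    """List which of the four signals this satellite currently trips."""
    reasons = []
    if s.weekly_users > max_users:  # hypothetical threshold
        reasons.append("usage")
    if s.dependent_systems > max_dependents:  # hypothetical threshold
        reasons.append("dependencies")
    if s.revenue_depends_on_it:
        reasons.append("revenue")
    if s.compliance_relevant:
        reasons.append("compliance")
    return reasons


def should_migrate(s: SatelliteSignals) -> bool:
    """Assumed policy: revenue or compliance alone is enough; otherwise
    both usage and dependencies must be over their thresholds."""
    reasons = set(migration_reasons(s))
    hard_signal = bool(reasons & {"revenue", "compliance"})
    soft_signals = {"usage", "dependencies"} <= reasons
    return hard_signal or soft_signals
```

Run something like this over your satellite inventory once a quarter and the "quietly load-bearing" tools stop being a surprise.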

What Large Organizations Underestimate

Tools alone are insufficient.

You need:

Core APIs with validation

Clear data ownership

Permission architecture

Monitoring

Defined integration standards

Without this infrastructure, AI tool rollouts stall. The tools that do get used become shadow IT. Security blocks everything. And the organization ends up worse off than before - more tools, more chaos, less control.
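The checklist above can live as code instead of a wiki page. A minimal sketch, assuming each satellite declares a small manifest before it gets credentials: the `SatelliteManifest` fields, the scope names, and the rules in `violations` are all hypothetical placeholders for whatever your own standards say.

```python
from dataclasses import dataclass, field

# Hypothetical scopes the core is willing to grant to satellites.
GRANTABLE_SCOPES = {"orders:read", "customers:read", "orders:write_status"}


@dataclass
class SatelliteManifest:
    name: str
    owner: str                                      # clear ownership
    scopes: set[str] = field(default_factory=set)   # permission architecture
    health_url: str = ""                            # monitoring hook
    uses_core_api_only: bool = True                 # integration standard


def violations(m: SatelliteManifest) -> list[str]:
    """Return every rule the manifest breaks; an empty list means it may connect."""
    problems = []
    if not m.owner:
        problems.append("no owner")
    extra = m.scopes - GRANTABLE_SCOPES
    if extra:
        problems.append("ungrantable scopes: " + ", ".join(sorted(extra)))
    if not m.health_url:
        problems.append("no monitoring endpoint")
    if not m.uses_core_api_only:
        problems.append("bypasses core API")
    return problems
```

A manifest with an owner, grantable scopes, and a health endpoint passes with an empty list. Anything else comes back with a concrete reason, which is a better answer than a blanket "security says no."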

The bottleneck is not tooling. It’s architecture.

The uncomfortable truth: most organizations want the benefits of distributed innovation without investing in the enabling infrastructure. They want moons without building the planet first.

Strategic Positioning

Here’s where I land:

AI is the lowest-friction entry layer. Fast experiments. Disposable artifacts. Rapid prototyping.

No-code extends structured autonomy. Workflows that need to persist but don’t need to scale globally (although tools like Xano seem to scale quite nicely).

Pro-code absorbs systemic complexity. The stuff that can’t fail.

None replaces the others. They coexist.

The intelligent organization:

Designs a hardened core

Exposes clean interfaces

Enables controlled chaos at the edges

Accepts replaceability of satellites

Protects irreversibility in the core

Questions Worth Sitting With

1. What’s currently in your “core” that should be a satellite? What’s a satellite that’s quietly becoming load-bearing?

2. Is your engineering team a bottleneck because they’re protecting quality - or because you haven’t built the interfaces that would make satellites safe?

3. When a no-code tool fails at scale, do you migrate it inward or patch it forever?

4. Are you debating AI vs. no-code vs. pro-code ideologically - or asking where each belongs on the complexity curve?

5. What would it take to give your staff permission to build at the edge - without creating security nightmares?

---

The debate isn’t which tool wins.

The real question: where does each tool belong on the complexity curve?

Stability at the center. Speed at the edge. Interfaces in between.

Your move.